I had successfully (maybe with an asterisk or two) kept a plastic tub at a roughly stable temperature. On to plastic tub v2! This is getting into mid-2018, and I’m starting to have a rough sense of things, but for the most part I still don’t know anything. I’m still using an ESP8266 and still using the Arduino wrapper. By this point my Arduino program is getting pretty unwieldy, but it’s still under 1000 lines. I had no idea how Arduino programs generally look, so it was pretty stream-of-consciousness with plenty of direction changes.

The original plastic tub had been scrapped (something about a giant hole melted in the side?), but luckily I had another. It was identical, but I’d learned my lesson. Ensuring the heater doesn’t burn anything down is priority number one. In the longer term, it was clear an incandescent light bulb wasn’t going to be the best heater for a number of reasons:

- they get too hot (over 100°C easily)

- they’re a weird shape, as heat is a byproduct and not the main use

- they’re just not easily available anymore (that’s weird to say…)

- they put off a ton of light, which doesn’t necessarily have an incubator-related downside, but it dissuades me from looking at the thing

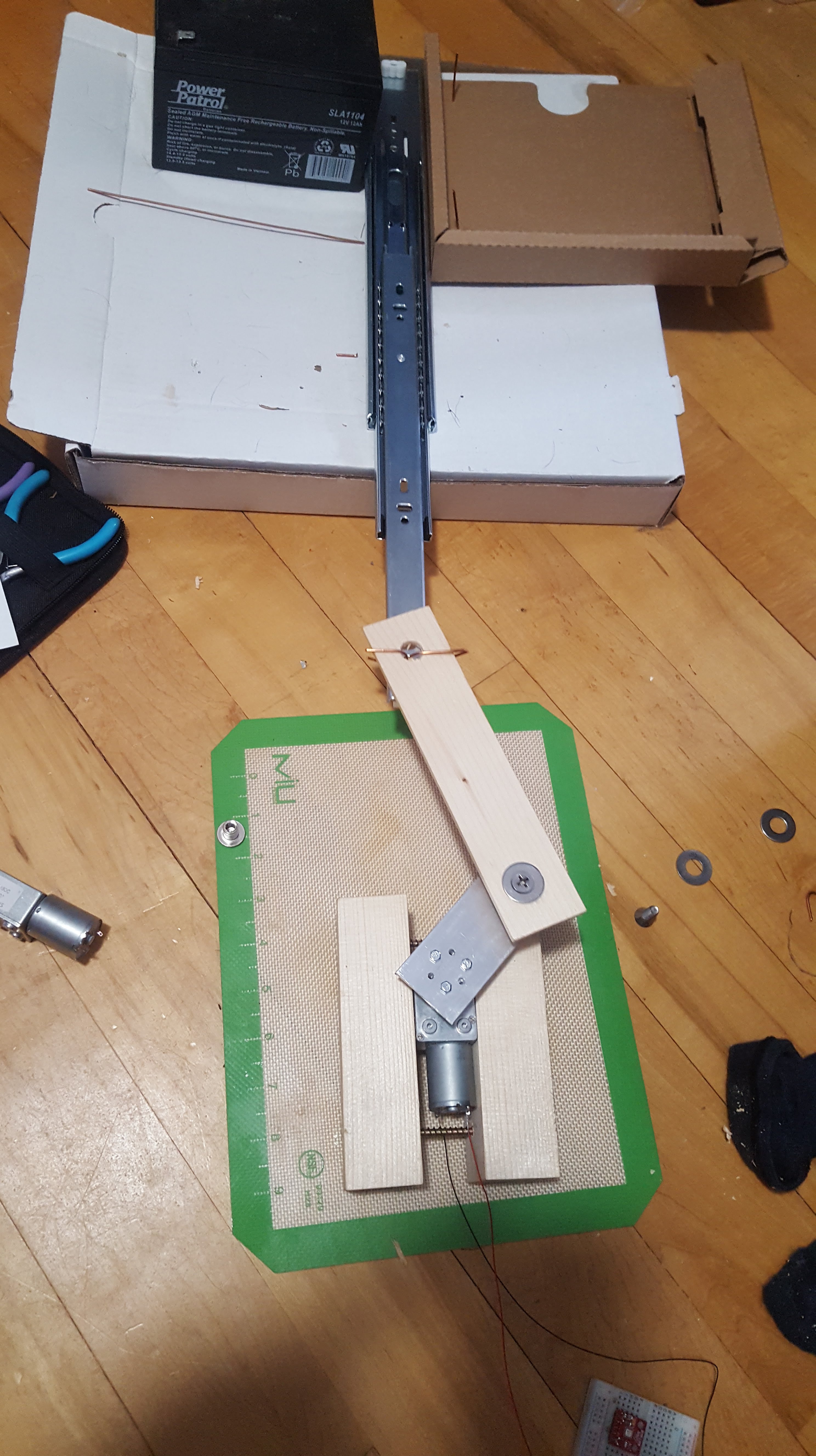

I ordered a bunch of different styles of heaters, but they wouldn’t be showing up for a long time (almost everything I get is the cheapest version of itself and takes 1 to 2 months to arrive from China). While a light bulb was never going to be the long-term answer, it’s what I had available. To make it safer I picked up one of these from the local home improvement store:

This was dramatically safer than my old light bulb holder, as by its very nature things are less likely to be close to it. I still just rested it on the bottom of the tub, but now it could be centered to maximize the distance from the sides.

When things go pear-shaped – software side

Note that at this point while the project is keeping a relatively “fixed” heat, that’s pretty secondary. So much other stuff is going wrong that the fact it’s intended to be an incubator is virtually irrelevant. At this stage, I ran into two significant problems. First was a nefarious software problem. I had blown past my previous record run of ~16 continuous hours of operation and was closing in on 30 hours before catastrophic malfunction. For some reason, after just under 30 hours my microcontroller would reboot.

I’ve been working in Java for years, and I’ve honestly never really thought about memory. Even when manually allocating memory in school, it wasn’t for anything real – if you got it wrong you lost marks or something. You might be able to guess where this is headed – I had a very slow memory leak. I was pointed towards the possibility of a memory leak with the overly kind help of Internet stranger Rob Tillaart (really, an absolute gem who just so happened to be the author of a library I was using).

I started logging the free memory on the device. After 5 or 10 minutes it had stayed rock steady, so I quickly dismissed a leak as the culprit. Then the unthinkable happened. I checked back a couple of hours later and the free memory had dropped. Not by a lot, but by some. That meant that something somewhere was allocating memory and not freeing it, even though I didn’t intentionally do so anywhere.

Reviewing my logs, it looked like it leaked 240 bytes roughly every 10 minutes. Always 240 bytes. Coming from web application development, who even cares about 240 bytes?! Turns out I do; in fact, I very much do. With ~41000 bytes free on the ESP8266, that means every ~28.8 hours it would hit an out-of-memory error and restart. With some data in hand, I was able to quickly narrow in on the issue. Every time I sent the NTP packet (once every 10 minutes), it leaked 240 bytes. I never actually looked at the root cause, but it seemed like sending UDP data without reading the responses (I was ignoring some content I didn’t care about) would keep the memory for the received data allocated.
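The arithmetic behind that run-time limit can be checked with a small helper. This is only an illustrative sketch, not code from the project: the function name is made up, and it assumes a perfectly constant leak. On the ESP8266 Arduino core the free-heap figure would come from `ESP.getFreeHeap()`. With the rounded 41000-byte figure the result is about 28.5 hours; the ~28.8 hours quoted above corresponds to a starting value nearer 41500 bytes.

```cpp
#include <cstdint>

// Hours until the heap runs out, assuming a fixed number of bytes leaks
// at a fixed interval. hoursUntilExhausted is a hypothetical helper name.
// Returns a negative value as a sentinel when nothing is leaking.
double hoursUntilExhausted(uint32_t freeBytes, uint32_t leakBytes,
                           double intervalMinutes) {
    if (leakBytes == 0) {
        return -1.0;  // no leak, so the heap never runs out
    }
    // Number of leak intervals the free heap can absorb.
    double intervals = static_cast<double>(freeBytes) / leakBytes;
    return intervals * intervalMinutes / 60.0;
}
```

Calling `hoursUntilExhausted(41000, 240, 10.0)` lands between 28 and 29 hours, which lines up with the reboots showing up just under the 30-hour mark.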

Fixing that code up, the memory leak was resolved. Lesson learned: always track memory consumption in development!
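Since the root cause was never confirmed, the following is only one plausible shape for such a fix, assuming the leak really did come from responses that were never read: drain and discard whatever is waiting on the socket. `drainPending` is a hypothetical helper. It is written as a template so it works with anything exposing `parsePacket()` and `read(buffer, len)` in the style of the Arduino `WiFiUDP` class, and `FakeUdp` is a made-up stand-in so the logic can be tried off-device.

```cpp
#include <algorithm>
#include <cstddef>
#include <cstdint>
#include <vector>

// Reads and throws away every pending datagram. Returns bytes discarded.
// Assumes parsePacket() returns the next datagram's size (0 if none) and
// read() returns the number of bytes copied (0 or less when exhausted).
template <typename Udp>
size_t drainPending(Udp& udp) {
    uint8_t scratch[64];
    size_t discarded = 0;
    while (udp.parsePacket() > 0) {
        int n;
        while ((n = udp.read(scratch, sizeof(scratch))) > 0) {
            discarded += static_cast<size_t>(n);
        }
    }
    return discarded;
}

// Host-side stand-in for a UDP socket, for experimentation only.
struct FakeUdp {
    std::vector<int> queued;  // sizes of datagrams waiting to be read
    int remaining = 0;        // unread bytes in the current datagram

    int parsePacket() {
        if (queued.empty()) return 0;
        remaining = queued.back();
        queued.pop_back();
        return remaining;
    }

    int read(uint8_t*, size_t len) {
        int n = std::min(remaining, static_cast<int>(len));
        remaining -= n;
        return n;
    }
};
```

On a device, the idea would be to call something like this after each NTP request once the useful part of the reply has been handled, so nothing is left queued.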

When things go pear-shaped – hardware side

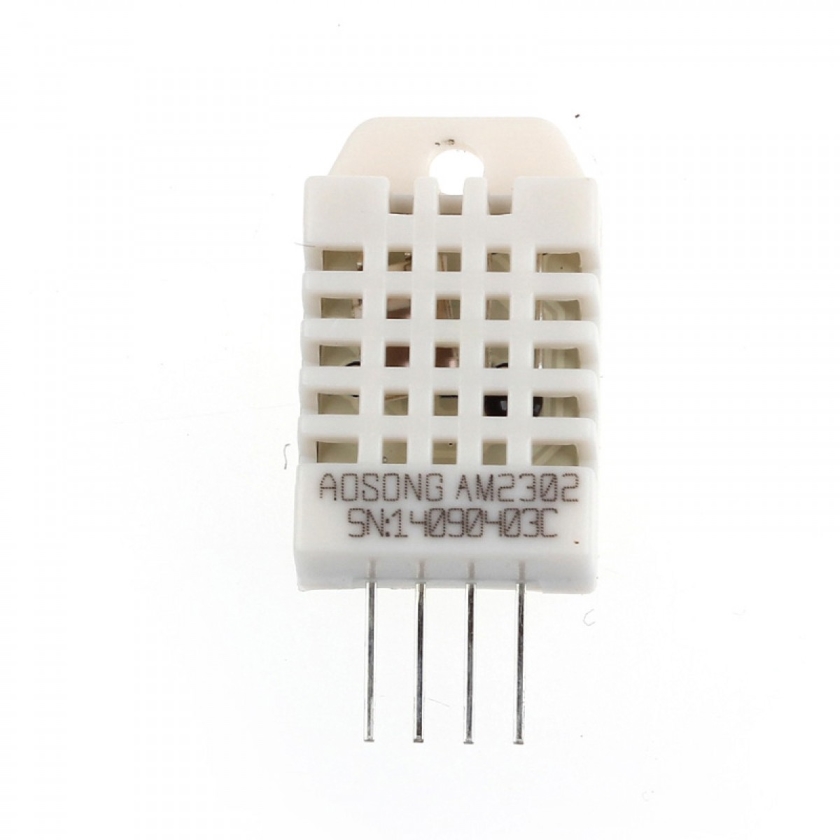

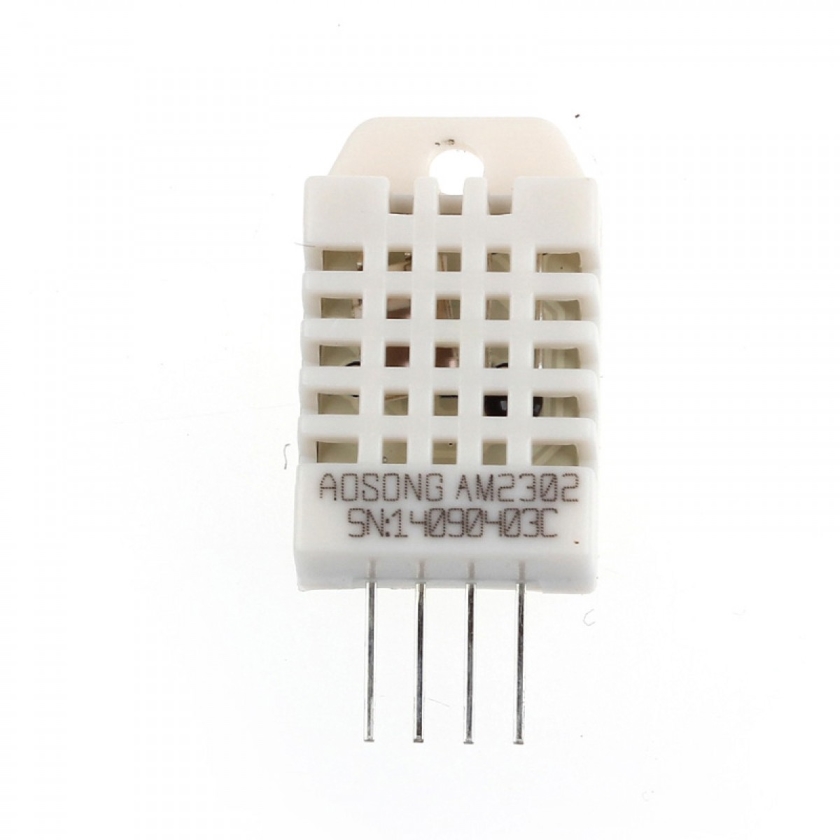

Clearing the ~29-hour hard limit on run time imposed by the memory leak let me run into my next failure. I was using DHT22 temperature and humidity sensors. If you don’t know what you’re doing and you’re in the Arduino world, these are ubiquitous. They’re also pretty much trash, in my experience. They work a little, but there’s just no reason for them to exist when other sensors exist. The sensor can be had for ~$3 apiece, has a ±0.5°C temperature accuracy, and a 2-second sensing period. They’re not objectively terrible, and I’m sure someone more competent could make them work more reliably, but there are just so many alternatives that have better performance, better price, better reliability, or all of the above.

My scheme was this: I didn’t want to actually go to any effort to calibrate the sensors, so I hoped that if I put a bunch of them in, discarded obvious outliers, and averaged the remaining values, I could get some reasonable consistency. I was reading each sensor every 2 seconds, sending the reading off to a server, then repeating. That’s when the sensor failures started to kick in…
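The post doesn’t say how “obvious outliers” were identified, so the sketch below picks one simple rule as an assumption: keep only readings within a fixed distance of the median, then average what is left. The function name and the threshold parameter are both hypothetical.

```cpp
#include <algorithm>
#include <cmath>
#include <cstddef>
#include <vector>

// Mean of the readings that lie within maxDeviation of the median.
// Returns NAN when there are no readings. If every reading is rejected
// (possible with an even count and two distant middle values), the
// median itself is returned as a fallback.
double robustAverage(std::vector<double> readings, double maxDeviation) {
    if (readings.empty()) {
        return NAN;
    }
    std::sort(readings.begin(), readings.end());
    const size_t n = readings.size();
    const double median =
        (n % 2 == 1) ? readings[n / 2]
                     : 0.5 * (readings[n / 2 - 1] + readings[n / 2]);

    double sum = 0.0;
    int kept = 0;
    for (double r : readings) {
        if (std::fabs(r - median) <= maxDeviation) {
            sum += r;
            ++kept;
        }
    }
    return kept > 0 ? sum / kept : median;
}
```

For example, with readings of 37.4, 37.6, 37.5, and a bogus 85.0, and a threshold of 1.0, the 85.0 is dropped and the other three are averaged. A median-based rule is used here because a single wild reading cannot drag the reference point the way it would with a plain mean.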

Following another recommendation from Rob Tillaart, I tried swapping the original 10k resistors for 4.7k and started tracking specifically what the failures were. Each row below counts the read attempts for a given sensor and how they turned out. Here’s a snapshot:

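| Sensor | Resistor | Total | OK | Checksum error | Timeout | Unknown |
|---|---|---|---|---|---|---|
| 1 | 10k | 48950 | 48929 | 21 | 0 | 0 |
| 2 | 10k | 48950 | 48354 | 596 | 0 | 0 |
| 3 | 4.7k | 48950 | 46612 | 2336 | 0 | 0 |
| 4 | 4.7k | 48950 | 47447 | 1503 | 0 | 0 |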

As you can see, it’s just shy of 50000 reads per sensor across 4 sensors – 2 with 10k resistors and 2 with 4.7k. The best sensor had a ~0.04% error rate while the worst-performing sensor had a whopping 4.77% error rate! The obvious conclusion here is that this is a hardware implementation/user issue and not a sensor issue, but it’s still a pain.
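A per-sensor tally like the one in the snapshot can be kept with a handful of counters. This is a minimal sketch rather than the project’s actual code: the `ReadStatus` enum and `SensorStats` struct are hypothetical names, and the error rate is taken as failed reads divided by total reads.

```cpp
#include <cstdint>

// Possible outcomes of one read attempt (hypothetical names).
enum class ReadStatus { Ok, ChecksumError, Timeout, Unknown };

// Running counts for a single sensor.
struct SensorStats {
    uint32_t total = 0;
    uint32_t ok = 0;
    uint32_t checksum = 0;
    uint32_t timeout = 0;
    uint32_t unknown = 0;

    // Count one read attempt under the matching outcome.
    void record(ReadStatus status) {
        ++total;
        switch (status) {
            case ReadStatus::Ok:            ++ok;       break;
            case ReadStatus::ChecksumError: ++checksum; break;
            case ReadStatus::Timeout:       ++timeout;  break;
            case ReadStatus::Unknown:       ++unknown;  break;
        }
    }

    // Failed reads as a percentage of all reads (0 if nothing recorded).
    double errorPercent() const {
        if (total == 0) return 0.0;
        return 100.0 * (checksum + timeout + unknown) / total;
    }
};
```

Plugging in the snapshot, 21 failures out of 48950 reads is about 0.04%, and 2336 out of 48950 is about 4.77%, matching the best and worst figures quoted.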

Ignoring the checksum errors (which just manifest as a failed read), the real issue is that, without exception, the sensors would eventually lock up and time out. That is to say, at a seemingly arbitrary time, every DHT22 sensor in every configuration I tried eventually locked up. I had purchased a few different form factors and manufacturers as well, so this seemed like a fundamental issue somewhere.

While I’d absolutely shattered the 28-hour continuous run record, sensors consistently started to drop off one by one and I couldn’t get any readings much into the second day. The program still ran fine, just with no temperature or humidity data coming in. After floundering on this issue for a week or so, I ended up just cutting the power to the sensors when a timeout was detected. This gave them a chance to reboot, and seemed to work robustly enough to move forward. Again, I didn’t discover the root cause of this, and enough changed over time that I’m not sure specifically what the issues were, but here are my guesses, in decreasing order of likelihood:

- using a breadboard to hook things up (these are the cause of SO MANY of my problems)

- using 3.3V logic – I got a logic level shifter eventually

- timing interference from WiFi or other ESP8266 shenanigans

- the ESP8266 itself – I got a bunch of ESP32 modules and have been using those since
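The power-cut workaround can be pictured as a tiny state machine: when a timeout is seen, switch the sensor off for a while, switch it back on, then wait before trusting reads again. The class below is an illustrative sketch only. Its name, the off and warm-up durations, and the idea of feeding it timestamps from something like `millis()` are all assumptions; how the supply is actually switched is left to the caller.

```cpp
#include <cstdint>

// Decides when a sensor's supply should be on and when reads are allowed.
// Times are in milliseconds; unsigned subtraction keeps the elapsed-time
// maths correct across counter wrap-around.
class SensorPowerCycler {
public:
    SensorPowerCycler(uint32_t offMs, uint32_t warmupMs)
        : offMs_(offMs), warmupMs_(warmupMs) {}

    // Report a timed-out read; starts a power cycle if not already in one.
    void onTimeout(uint32_t nowMs) {
        if (state_ == State::Ready) {
            state_ = State::Off;
            since_ = nowMs;
        }
    }

    // Call regularly. Returns true when the supply should be switched on.
    bool update(uint32_t nowMs) {
        const uint32_t elapsed = nowMs - since_;
        if (state_ == State::Off && elapsed >= offMs_) {
            state_ = State::WarmingUp;
            since_ = nowMs;
        } else if (state_ == State::WarmingUp && elapsed >= warmupMs_) {
            state_ = State::Ready;
        }
        return state_ != State::Off;
    }

    // True once the sensor has been powered long enough to read again.
    bool canRead() const { return state_ == State::Ready; }

private:
    enum class State { Ready, Off, WarmingUp };
    State state_ = State::Ready;
    uint32_t since_ = 0;
    uint32_t offMs_;
    uint32_t warmupMs_;
};
```

In a main loop, the return value of `update()` would drive whatever controls the sensor’s power, and reads would be skipped while `canRead()` is false. Keeping this non-blocking means the rest of the program, heater control included, carries on while a sensor restarts.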

Lessons learned:

- always track your memory usage during development, memory leaks can be slow and come from unexpected places

- just… don’t use breadboards. If anything at all is going wrong and you’re using a breadboard, assume the breadboard is the issue until proven otherwise

- even sensors can fail! Robust software/electronics design has to accept that failure (e.g. by not burning anything down) and deal with it (e.g. by rebooting the sensor)